AWS Blogs

EBSCOlearning offers corporate learning, educational, and career development products and services for businesses, educational institutions, and workforce development organizations. As a division of EBSCO Information Services, EBSCOlearning is committed to enhancing professional development and educational skills.

In this post, we illustrate how EBSCOlearning partnered with the AWS Generative AI Innovation Center (GenAIIC) to use generative AI to transform their learning assessment process. We explore the challenges of traditional question-answer (QA) generation and the AI-driven solution developed to address them.

In the rapidly evolving landscape of education and professional development, the ability to effectively assess learners’ understanding of content is crucial. EBSCOlearning, a leader in the realm of online learning, recognized this need and embarked on an ambitious journey to transform their assessment creation process using cutting-edge generative AI technology.

Amazon Bedrock is a fully managed service that offers a choice of high-performing foundation models (FMs) from leading AI companies such as AI21 Labs, Anthropic, Cohere, Meta, Mistral AI, Stability AI, and Amazon through a single API, along with a broad set of capabilities to build generative AI applications with security, privacy, and responsible AI. This makes it well positioned for tasks such as generating assessment questions from learning content.

EBSCOlearning’s learning paths—comprising videos, book summaries, and articles—form the backbone of a multitude of educational and professional development programs. However, the company faced a significant hurdle: creating high-quality, multiple-choice questions for these learning paths was a time-consuming and resource-intensive process.

Traditionally, subject matter experts (SMEs) would meticulously craft each question set to be relevant and accurate and to align with learning objectives. Although this approach guaranteed quality, it was slow, expensive, and difficult to scale. As EBSCOlearning’s content library continues to grow, so does the need for a more efficient solution.

Recognizing the potential of AI to address this challenge, EBSCOlearning partnered with the GenAIIC to develop an AI-powered question generation system. The goal was ambitious: to create an automated solution that could produce high-quality, multiple-choice questions at scale, while adhering to strict guidelines on bias, safety, relevance, style, tone, meaningfulness, clarity, and diversity, equity, and inclusion (DEI). The QA pairs had to be grounded in the learning content and test different levels of understanding, such as recall, comprehension, and application of knowledge. Additionally, explanations were needed to justify why an answer was correct or incorrect.

The team faced several key challenges:

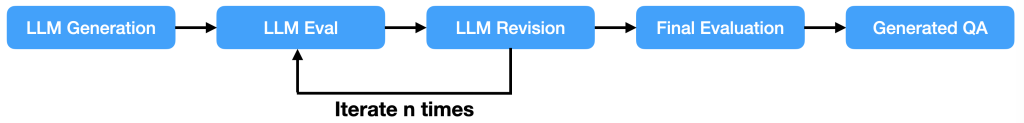

The GenAIIC team developed a pipeline built on large language models (LLMs), specifically Anthropic’s Claude 3.5 Sonnet in Amazon Bedrock. This pipeline is illustrated in the following figure and consists of three key components: QA generation, multifaceted evaluation, and intelligent revision.

The process begins with the QA generation component. This module takes in the learning content—which could be a video transcript, book summary, or article—and generates an initial set of multiple-choice questions using in-context learning.
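The generation step can be sketched with the Amazon Bedrock Converse API. This is a minimal sketch under stated assumptions, not the GenAIIC implementation: the prompt wording, the helpers `build_generation_prompt` and `generate_questions`, the example format, the inference settings, and the assumption that the model replies with bare JSON are all hypothetical. The client is passed in, so either a real `boto3.client("bedrock-runtime")` or a test stub can be used.

```python
import json
from typing import Any

# Assumed Bedrock model ID for Claude 3.5 Sonnet; confirm which model IDs or
# inference profiles are available in your account and Region.
MODEL_ID = "anthropic.claude-3-5-sonnet-20240620-v1:0"


def build_generation_prompt(content: str, examples: list[dict], guidelines: list[str]) -> str:
    """Assemble a few-shot (in-context learning) prompt. Wording is hypothetical."""
    parts = [
        "Write up to seven multiple-choice questions about the learning content below.",
        "Each question needs four answer choices, one correct answer, and an "
        "explanation of why each choice is correct or incorrect.",
        "Guidelines:\n" + "\n".join(f"- {g}" for g in guidelines),
    ]
    # In-context learning: show SME-written question sets as worked examples.
    for i, example in enumerate(examples, start=1):
        parts.append(f"Example {i}:\n{json.dumps(example, indent=2)}")
    parts.append(f"<content>\n{content}\n</content>")
    parts.append("Respond with a JSON list of questions and nothing else.")
    return "\n\n".join(parts)


def generate_questions(client: Any, content: str, examples: list[dict],
                       guidelines: list[str]) -> list[dict]:
    """Call the Bedrock Converse API and parse the model's JSON reply."""
    prompt = build_generation_prompt(content, examples, guidelines)
    response = client.converse(
        modelId=MODEL_ID,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
        inferenceConfig={"maxTokens": 4096, "temperature": 0.5},  # illustrative settings
    )
    reply = response["output"]["message"]["content"][0]["text"]
    return json.loads(reply)  # raises json.JSONDecodeError on malformed output
```

With credentials configured, a call might look like `generate_questions(boto3.client("bedrock-runtime"), transcript, sme_examples, guidelines)`, where the three data arguments are placeholders for your own content.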

EBSCOlearning experts and GenAIIC scientists worked together to develop a sophisticated prompt engineering approach using Anthropic’s Claude 3.5 Sonnet model in Amazon Bedrock. To align with EBSCOlearning’s high standards, the prompt includes:

The system aims to generate up to seven questions for each piece of content, each with four answer choices (one of them correct) and detailed explanations of why each answer is correct or incorrect.
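The output contract in the preceding paragraph (up to seven questions, four choices, one correct answer, an explanation per choice) is easy to check mechanically before any LLM-based evaluation runs. The following sketch assumes a hypothetical `QAItem` structure with lettered choices; the field names are illustrative, not EBSCOlearning's schema.

```python
from dataclasses import dataclass

MAX_QUESTIONS = 7
NUM_CHOICES = 4


@dataclass
class QAItem:
    question: str
    choices: dict[str, str]        # e.g. {"A": "...", "B": "...", "C": "...", "D": "..."}
    correct: str                   # key of the single correct choice
    explanations: dict[str, str]   # why each choice is correct or incorrect
    overall: str = ""              # overall explanation grounded in the content


def structural_problems(item: QAItem) -> list[str]:
    """Return human-readable problems with one question's structure."""
    problems = []
    if len(item.choices) != NUM_CHOICES:
        problems.append(f"expected {NUM_CHOICES} choices, got {len(item.choices)}")
    if item.correct not in item.choices:
        problems.append(f"correct answer {item.correct!r} is not one of the choices")
    missing = set(item.choices) - set(item.explanations)
    if missing:
        problems.append(f"missing explanations for {sorted(missing)}")
    return problems


def check_question_set(items: list[QAItem]) -> list[str]:
    """Check the set size, then every question in it."""
    problems = []
    if len(items) > MAX_QUESTIONS:
        problems.append(f"{len(items)} questions exceeds the limit of {MAX_QUESTIONS}")
    for i, item in enumerate(items):
        problems += [f"Q{i + 1}: {p}" for p in structural_problems(item)]
    return problems
```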

After the initial set of questions is generated, it undergoes a rigorous evaluation process. This multifaceted approach makes sure that the questions adhere to all quality standards and guidelines. The evaluation process includes three phases: LLM-based guideline evaluation, rule-based checks, and a final evaluation.

In collaboration with EBSCOlearning, the GenAIIC team manually developed a comprehensive set of evaluation guidelines covering fundamental requirements for multiple-choice questions, such as validity, accuracy, and relevance. They also incorporated EBSCOlearning’s specific standards for diversity, equity, inclusion, and belonging (DEIB), as well as style and tone preferences. The AI system evaluates each question against the established guidelines and generates a structured output that includes detailed reasoning and a rating on a three-point scale: 1 (invalid), 2 (partially valid), or 3 (valid). This rating is later used to revise the questions.
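The structured evaluation output could be represented and parsed as follows. This sketch assumes the evaluator is prompted to return a JSON list whose entries carry `guideline`, `rating`, `summary`, and `reasoning` fields; those field names and the helper functions are hypothetical. Mapping the three-point scale onto an `IntEnum` rejects out-of-range ratings and makes it simple to route anything rated below 3 to revision.

```python
import json
from dataclasses import dataclass
from enum import IntEnum


class Rating(IntEnum):
    INVALID = 1
    PARTIALLY_VALID = 2
    VALID = 3


@dataclass
class GuidelineResult:
    guideline: str
    rating: Rating
    summary: str
    reasoning: str


def parse_evaluation(raw: str) -> list[GuidelineResult]:
    """Parse the evaluator's JSON reply (assumed format: a list of objects)."""
    results = []
    for entry in json.loads(raw):
        results.append(GuidelineResult(
            guideline=entry["guideline"],
            rating=Rating(int(entry["rating"])),  # ValueError if outside 1-3
            summary=entry.get("summary", ""),
            reasoning=entry["reasoning"],
        ))
    return results


def needs_revision(results: list[GuidelineResult]) -> list[GuidelineResult]:
    """Anything rated below VALID is passed on to the revision step."""
    return [r for r in results if r.rating < Rating.VALID]
```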

This process presented several significant challenges. The primary one was making sure that the AI model could assess multiple complex guidelines simultaneously without overlooking any crucial aspect. This was particularly difficult because the evaluation had to weigh many different factors while maintaining consistency across questions.

To keep the LLM from overlooking guidelines when presented with all of them at once, the evaluation process was split into smaller, manageable tasks: the AI model either focuses on a few guidelines at a time or evaluates smaller chunks of questions in parallel. This way, each guideline receives focused attention, resulting in a more accurate and comprehensive evaluation. The system was also designed to be modular, so guidelines can be added or removed easily. Because of this flexibility, the evaluation process can adapt quickly to new requirements or changes in educational standards.
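The decomposition described above can be sketched as a fan-out over (guideline group, question chunk) pairs. The guideline registry, the group sizes, and the `evaluate` callable (a stand-in for one LLM evaluation call) are hypothetical; the point is that each call sees only a few guidelines and a few questions, and that adding a guideline is a one-line change to the registry.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# Hypothetical, modular guideline registry.
GUIDELINES: dict[str, str] = {
    "accuracy": "The correct answer is supported by the content.",
    "relevance": "The question tests a key idea from the content.",
    "clarity": "The question is unambiguous.",
    "tone": "The wording matches the house style and tone.",
    "deib": "The question follows DEIB standards.",
}


def chunk(items: list, size: int) -> list[list]:
    """Split a list into consecutive pieces of at most `size` items."""
    return [items[i:i + size] for i in range(0, len(items), size)]


def evaluate_in_batches(
    questions: list[str],
    evaluate: Callable[[list[str], list[str]], dict],
    guidelines_per_call: int = 2,
    questions_per_call: int = 3,
    max_workers: int = 4,
) -> list[dict]:
    """Run one evaluator call per (guideline group, question chunk) pair, in parallel."""
    guideline_groups = chunk(list(GUIDELINES.values()), guidelines_per_call)
    question_chunks = chunk(questions, questions_per_call)
    jobs = [(g, q) for g in guideline_groups for q in question_chunks]
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves job order, so results line up with `jobs`.
        return list(pool.map(lambda job: evaluate(*job), jobs))
```

Every guideline is still checked against every question; the sketch only changes how much the model has to attend to in a single call.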

By generating detailed, structured feedback for each question, including numerical ratings, concise summaries, and in-depth reasoning, the system provides valuable insights for continual improvement. This level of detail shows how well each question aligns with the established criteria and points to targeted enhancements for the question generation process.

Some quantitative aspects of question quality proved challenging for the AI to consistently evaluate. For instance, the team noticed that correct answers were often longer than incorrect ones, making them straightforward to identify. To address this, they developed a custom algorithm that analyzes answer lengths and flags potential issues without relying on the LLM’s judgment.
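The post doesn't describe the custom length algorithm, so the following is only a simple stand-in heuristic. It counts words and flags a question when the correct answer is longer than every distractor by a hypothetical ratio, and it also measures how often the correct answer is the longest choice across a set. Both the word-count measure and the 1.3 threshold are assumptions.

```python
def flag_length_bias(choices: dict[str, str], correct: str, ratio: float = 1.3) -> bool:
    """Flag when the correct answer is notably longer than every distractor.

    Length is measured in words; the 1.3 ratio is a hypothetical threshold.
    """
    correct_len = len(choices[correct].split())
    longest_distractor = max(len(text.split()) for key, text in choices.items() if key != correct)
    return correct_len > longest_distractor and correct_len >= ratio * longest_distractor


def longest_is_correct_rate(items: list[tuple[dict[str, str], str]]) -> float:
    """Fraction of questions whose correct choice is strictly the longest one."""
    hits = 0
    for choices, correct in items:
        lengths = {key: len(text.split()) for key, text in choices.items()}
        if lengths[correct] > max(n for key, n in lengths.items() if key != correct):
            hits += 1
    return hits / len(items) if items else 0.0
```

Because this check is deterministic, it gives the same answer on every run, which is exactly what an LLM judge struggled to provide for this property.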

Beyond evaluating individual questions, the system also assesses the entire set of questions for a given piece of content. This step checks for duplicates, promotes diversity in the types of questions asked, and verifies that the set as a whole provides a comprehensive assessment of the learning material.
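Two of the set-level checks can be sketched without an LLM. Near-duplicate detection below uses `difflib.SequenceMatcher` string similarity as a swapped-in technique (the post doesn't say how duplicates are detected), and the 0.85 threshold is hypothetical. The coverage check assumes each question has been tagged with one of the understanding levels the post names (recall, comprehension, application).

```python
from difflib import SequenceMatcher
from itertools import combinations


def near_duplicates(questions: list[str], threshold: float = 0.85) -> list[tuple[int, int]]:
    """Return index pairs whose normalized text similarity meets the threshold."""
    # Normalize case and whitespace so trivial differences don't hide duplicates.
    normalized = [" ".join(q.lower().split()) for q in questions]
    return [
        (i, j)
        for i, j in combinations(range(len(normalized)), 2)
        if SequenceMatcher(None, normalized[i], normalized[j]).ratio() >= threshold
    ]


def missing_levels(levels: list[str],
                   required: tuple[str, ...] = ("recall", "comprehension", "application")) -> list[str]:
    """Return the required understanding levels that no question in the set targets."""
    present = set(levels)
    return [level for level in required if level not in present]
```

Surface similarity misses paraphrased duplicates, so in practice an LLM or embedding-based comparison may be needed alongside it.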

One key component of the pipeline is the intelligent revision module, where the iterative improvement happens. When the evaluation process flags an issue with a question, whether a guideline violation or a structural problem, the question is sent back for revision. The AI model receives specific feedback on how to address the violation and is directed to fix the issue by revising or replacing the QA pair.

The pipeline repeats this cycle until the question meets all of the specified quality standards. If a question still doesn’t meet the criteria after several attempts, it’s flagged for human review. This iterative approach makes sure that the final output isn’t merely a raw AI generation, but a refined product that has gone through multiple checks and improvements.
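The evaluate-revise loop with a human-review fallback can be sketched as follows. The `evaluate` and `revise` callables stand in for the LLM evaluation and revision calls and are hypothetical, as is the limit of three attempts (the post says only "several").

```python
from dataclasses import dataclass
from typing import Callable

MAX_ATTEMPTS = 3  # hypothetical; the post says only "several attempts"


@dataclass
class Outcome:
    question: dict
    attempts: int              # number of revisions performed
    needs_human_review: bool


def refine(
    question: dict,
    evaluate: Callable[[dict], list[str]],
    revise: Callable[[dict, list[str]], dict],
    max_attempts: int = MAX_ATTEMPTS,
) -> Outcome:
    """Evaluate, revise with targeted feedback, and repeat until no issues remain."""
    attempts = 0
    issues = evaluate(question)
    while issues and attempts < max_attempts:
        # The feedback passed to `revise` names the specific violations to fix.
        question = revise(question, issues)
        attempts += 1
        issues = evaluate(question)
    # Anything still failing after the last revision is escalated to a person.
    return Outcome(question, attempts, needs_human_review=bool(issues))
```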

Throughout the development process, the team maintained a strong focus on iterative tracking of changes. They implemented a unique history tracking system so they could monitor the evolution of each question through multiple rounds of generation, evaluation, and revision. This approach not only provided valuable insights into the AI model’s decision-making process, but also allowed for targeted improvements to the system over time. By closely tracking the AI model’s performance across multiple iterations, we were able to refine our prompts and evaluation criteria, resulting in a significant improvement in output quality.
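The post doesn't describe how the history tracking system is built. One plausible shape is an append-only log per question, aggregated across questions to show which guidelines the model violates most often, a natural signal for where to refine prompts. All names below are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Round:
    stage: str                                  # "generate", "evaluate", or "revise"
    question: dict
    violations: list[str] = field(default_factory=list)


@dataclass
class QuestionHistory:
    question_id: str
    rounds: list[Round] = field(default_factory=list)

    def record(self, stage: str, question: dict, violations=None) -> None:
        # Store a shallow copy so later in-place edits don't rewrite earlier snapshots.
        self.rounds.append(Round(stage, dict(question), list(violations or [])))

    def revisions(self) -> int:
        return sum(1 for r in self.rounds if r.stage == "revise")


def most_common_violations(histories: list[QuestionHistory], n: int = 3) -> list[tuple[str, int]]:
    """Aggregate violations across all questions and rounds."""
    counts = Counter(v for h in histories for r in h.rounds for v in r.violations)
    return counts.most_common(n)
```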

With EBSCOlearning’s vast content library in mind, the team built scalability into the core of their solution. They implemented multithreading capabilities, allowing the system to process multiple pieces of content simultaneously. They also developed sophisticated retry mechanisms to handle potential API failures or invalid outputs so the system remained reliable even when processing large volumes of content.
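A generic way to combine multithreading with retries is a thread pool over content items, with each pipeline run wrapped in exponential backoff plus jitter. The helper names and retry parameters are hypothetical. Threads suit this workload because it is dominated by waiting on API calls; for throttling errors specifically, botocore's own retry configuration (for example `Config(retries={"mode": "adaptive"})`) is an alternative to hand-rolled retries. Invalid model output can be retried the same way if parsing raises an exception such as `ValueError`.

```python
import random
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, TypeVar

T = TypeVar("T")


def with_retries(fn: Callable[[], T], max_retries: int = 4, base_delay: float = 1.0,
                 retry_on: tuple = (Exception,)) -> T:
    """Call fn, retrying with exponential backoff plus jitter on the listed exceptions."""
    for attempt in range(max_retries + 1):
        try:
            return fn()
        except retry_on:
            if attempt == max_retries:
                raise  # out of retries: surface the last error
            time.sleep(base_delay * 2 ** attempt + random.uniform(0, base_delay))
    raise RuntimeError("max_retries must be >= 0")


def process_library(contents: list[str], pipeline: Callable[[str], T],
                    max_workers: int = 8) -> list[T]:
    """Run the full pipeline for many pieces of content concurrently, in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(lambda c: with_retries(lambda: pipeline(c)), contents))
```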

By combining these components—intelligent generation, comprehensive evaluation, and adaptive revision—EBSCOlearning and the GenAIIC team created a system that not only automates the process of creating assessment questions but does so with a level of quality that rivals human-created content. This pipeline represents a significant leap forward in the application of AI to educational content creation, promising to revolutionize how learning assessments are developed and delivered.

The impact of this AI-powered solution on EBSCOlearning’s assessment creation process has been nothing short of transformative. Feedback from EBSCOlearning’s subject matter experts has been overwhelmingly positive, with the AI-generated questions meeting or exceeding the quality of manually created ones in many cases.

Key benefits of the new system include:

“The Generative AI Innovation Center’s automated solution for generating multiple-choice questions and answers considerably accelerated the timeline for deployment of assessments for our online learning platform. Their approach of leveraging advanced language models and implementing carefully constructed guidelines in collaboration with our subject matter experts and product management team has resulted in assessment material that is accurate, relevant, and of high quality. This solution is saving us considerable time and effort, and will enable us to scale assessments across the wide range of skills development resources on our platform.”

—Michael Laddin, Senior Vice President & General Manager, EBSCOlearning.

Here are two examples of generated QA pairs.

Question 1: What does the Consumer Relevancy model proposed by Crawford and Mathews assert about human values in business transactions?

Correct answer: C

Answer explanations:

Overall: The Book Summary states that the Consumer Relevancy model asserts that human values are more important than traditional value propositions and that businesses must recognize this need for human values as the contemporary currency of commerce.

Question 2: According to Sara N. King, David G. Altman, and Robert J. Lee, what is the primary benefit of leaders having clarity about their own values?

Correct answer: B

Answer explanations:

Overall: The Book Summary emphasizes that having clarity about one’s own values allows leaders to make more fulfilling career choices and helps them recognize when they are participating in actions that conflict with their core values.

For EBSCOlearning, this project is the first step towards their goal of scaling assessments across their entire online learning platform. They’re already planning to expand the system’s capabilities, including:

The potential applications of this technology extend far beyond EBSCOlearning’s current use case. From personalized learning paths to adaptive testing, the possibilities for AI in education and professional development are vast and exciting.

The collaboration between EBSCOlearning and the GenAIIC demonstrates the transformative power of AI when applied thoughtfully to real-world challenges. By combining cutting-edge technology with deep domain expertise, they’ve created a solution that not only solves a pressing business need but also has the potential to enhance learning experiences for millions of people. This solution is slated to produce assessment questions for hundreds and eventually thousands of learning paths in EBSCOlearning’s curriculum.

As we look to the future of education and professional development, it’s clear that AI will play an increasingly important role. The success of this project serves as a compelling example of how AI can be used to create more efficient, effective, and engaging learning experiences.

For businesses and educational institutions alike, the message is clear: embracing AI isn’t just about keeping up with technology trends—it’s about unlocking new possibilities to better serve learners and drive innovation in education. As EBSCOlearning’s journey shows, the future of learning assessment is here, and it’s powered by AI. Consider how such a solution can enrich your own e-learning content and delight your customers with high quality and on-point assessments. To get started, contact your AWS account manager. If you don’t have an AWS account manager, contact sales. Visit Generative AI Innovation Center to learn more about our program.

Yasin Khatami is a Senior Applied Scientist at the Generative AI Innovation Center. With more than a decade of experience in artificial intelligence (AI), he implements state-of-the-art AI products for AWS customers to drive innovation, efficiency and value for customer platforms. His expertise is in generative AI, large language models (LLM), multi-agent techniques, and multimodal learning.

Yifu Hu is an Applied Scientist at the Generative AI Innovation Center. He develops machine learning and generative AI solutions for diverse customer challenges across various industries. Yifu specializes in creative problem-solving, with expertise in AI/ML technologies, particularly in applications of large language models and AI agents.

Aude Genevay is a Senior Applied Scientist at the Generative AI Innovation Center, where she helps customers tackle critical business challenges and create value using generative AI. She holds a PhD in theoretical machine learning and enjoys turning cutting-edge research into real-world solutions.

Mike Laddin is Senior Vice President & General Manager of EBSCOlearning, a division of EBSCO Information Services. EBSCOlearning offers highly acclaimed online products and services for companies, educational institutions, and workforce development organizations. Mike oversees a team of professionals focused on unlocking the potential of people and organizations with on-demand upskilling and microlearning solutions. He has over 25 years of experience as both an entrepreneur and software executive in the information services industry. Mike received an MBA from the Lally School of Management at Rensselaer Polytechnic Institute, and outside of work he is an avid boater.

Alyssa Gigliotti is a Content Strategy and Product Operations Manager at EBSCOlearning, where she collaborates with her team to design top-tier microlearning solutions focused on enhancing business and power skills. With a background in English, professional writing, and technical communications from UMass Amherst, Alyssa combines her expertise in language with a strategic approach to educational content. Alyssa’s in-depth knowledge of the product and voice of the customer allows her to actively engage in product development planning to ensure her team continuously meets the needs of users. Outside the professional sphere, she is both a talented artist and a passionate reader, continuously seeking inspiration from creative and literary pursuits.
