AI face swap video has moved well beyond the novelty deepfake territory it occupied just a few years ago. What was once a technology associated primarily with entertainment and, unfortunately, misinformation is now a core capability in professional content production, used by brands, agencies, and independent creators to produce personalised video content at scale without requiring on-camera talent for every variant. This article looks at how modern AI face swap technology works, where it is genuinely useful, and which quality standards matter for professional applications, as the capability rapidly becomes a standard component of AI-augmented content workflows.

Face swap technology in video works by detecting and mapping a source face—either from a photo or a reference video—and applying it to a target face in existing footage. Earlier systems used landmark-based detection, identifying key facial points (eyes, nose, mouth, jawline) and warping the source face to align with the target’s geometry. Results were often unconvincing: inconsistent skin tone blending, edge artefacts around the hairline, and poor handling of motion or expressive facial movements were common failure modes.
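The landmark-based alignment step can be illustrated with a short Python sketch. It assumes facial landmarks have already been detected (the coordinates below are invented for illustration) and fits a single least-squares similarity transform (scale, rotation, translation) that maps source landmarks onto target landmarks. The function name `fit_similarity` is hypothetical, and a real landmark-based system would typically add a finer local warp on top of a global fit like this one.

```python
import cmath
import math


def fit_similarity(src, dst):
    """Least-squares 2D similarity transform mapping src landmarks onto dst.
    Points are (x, y) tuples; returns (scale, angle_radians, warp_function)."""
    if len(src) != len(dst) or len(src) < 2:
        raise ValueError("need at least two matching landmark pairs")
    zs = [complex(x, y) for x, y in src]
    zd = [complex(x, y) for x, y in dst]
    mean_s = sum(zs) / len(zs)
    mean_d = sum(zd) / len(zd)
    # Centre both point sets so only scale and rotation remain to be solved.
    cs = [z - mean_s for z in zs]
    cd = [z - mean_d for z in zd]
    denom = sum(abs(z) ** 2 for z in cs)
    if denom == 0:
        raise ValueError("source landmarks are all identical")
    # One complex factor c = scale * e^(i * angle) minimises sum |cd - c * cs|^2.
    c = sum(s.conjugate() * d for s, d in zip(cs, cd)) / denom

    def warp(point):
        z = complex(*point)
        w = c * (z - mean_s) + mean_d
        return (w.real, w.imag)

    return abs(c), cmath.phase(c), warp


# Hypothetical landmarks: the target is the source scaled x2, rotated 90 degrees, shifted.
src = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
dst = [(3.0, 4.0), (3.0, 6.0), (1.0, 4.0)]
scale, angle, warp = fit_similarity(src, dst)
print(round(scale, 6), round(math.degrees(angle), 6), warp((1.0, 1.0)))
```

Because a single global transform like this cannot bend to follow a smile or a turned head, it helps show why landmark-era results struggled with motion and expressive facial movements.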

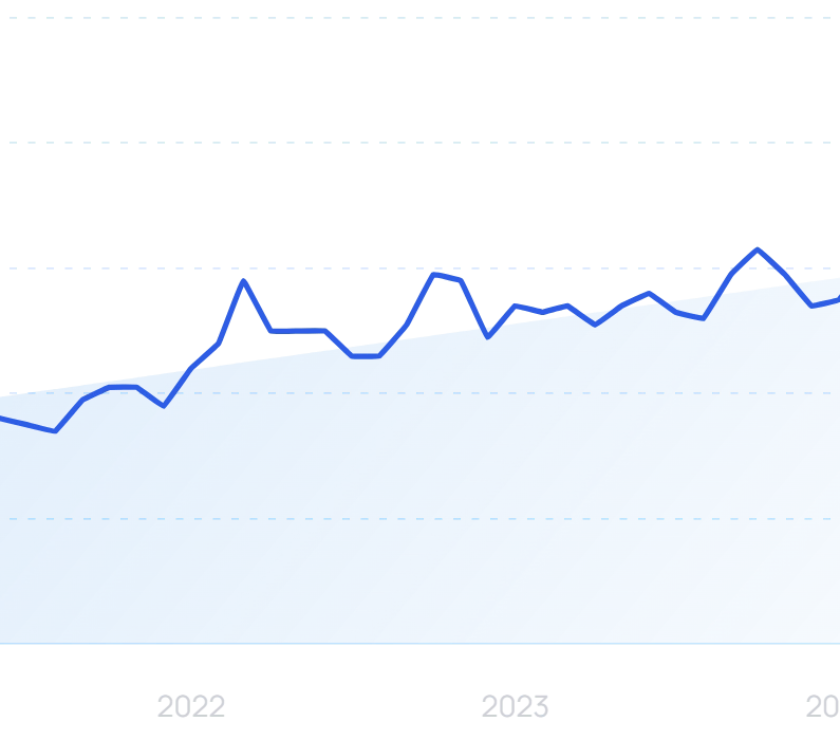

Modern AI face swap systems take much more sophisticated approaches, typically using neural network architectures trained on large datasets of facial images and video sequences. These systems learn to reconstruct facial appearance with natural skin texture, consistent colouring, and appropriate response to scene lighting. The quality gap between face swap technology from 2020 and from 2025 is large enough that output from leading current systems is often difficult to distinguish from genuine footage at normal viewing distances and resolutions.

The commercial applications of AI face swap video span several categories, each with distinct quality requirements and production workflow implications.

One of the most commercially relevant applications is the ability to take a branded AI avatar or virtual influencer and apply it to diverse video content without re-generating each video from scratch. A creator or brand that has established a digital character can apply that character’s face to product demonstration videos, testimonial formats, or social content templates—maintaining consistent brand identity across content that would otherwise require multiple production cycles. This workflow dramatically reduces per-asset production time and cost while preserving the visual consistency that gives a virtual influencer its commercial value.

Agencies producing video content for multiple markets often need regional or demographic variants of the same video—same script, different presenter. AI face swap enables the production of these variants without scheduling and filming multiple talent sessions, particularly when combined with voice dubbing or AI voice synthesis. The economic case for this application is compelling: production costs that previously scaled linearly with the number of variants can instead be reduced to a largely fixed post-production cost per base video.

Customer testimonials and case study videos often face a challenge: customers are willing to share their experience in writing but unwilling to appear on camera. AI face swap allows the production of convincing video testimonials using consenting individuals as on-camera talent while applying a distinct digital face, protecting the actual customer’s identity while preserving the format’s persuasive impact. This application requires careful implementation and appropriate disclosure but represents a legitimate professional use case that video production teams have adopted.

AI face swap technology splits into two operational modes that serve distinct production contexts: real-time face swap, which processes video frames live during recording or streaming, and post-production face swap, which applies facial replacement to pre-recorded footage as a rendering task.

Real-time AI face swap is used primarily in live streaming, video conferencing, and interactive content. The latency constraints are severe: each frame must be processed and replaced before the next frame arrives (about 33 milliseconds at 30 frames per second), or the system must drop frames or fall progressively behind the live input. This places significant demands on both the AI model architecture and the underlying hardware. Quality trade-offs are inherent; real-time systems typically sacrifice some realism for processing speed, particularly in edge handling and lighting adaptation.
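The frame-budget arithmetic is simple enough to sketch. The snippet below assumes a sequential pipeline and uses invented per-stage timings (`detect`, `swap`, and `blend` are hypothetical labels, not measurements from any real system) to check whether the total fits inside one frame interval.

```python
def frame_budget_ms(fps: float) -> float:
    """Milliseconds available to process one frame before the next one arrives."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


def fits_realtime(stage_ms: dict[str, float], fps: float) -> tuple[bool, float]:
    """Return (fits, headroom_ms) for stages that run one after another per frame."""
    total = sum(stage_ms.values())
    headroom = frame_budget_ms(fps) - total
    return headroom >= 0, headroom


# Hypothetical per-frame stage timings in milliseconds (illustrative only).
stages = {"detect": 6.0, "swap": 18.0, "blend": 5.0}
for fps in (30, 60):
    ok, headroom = fits_realtime(stages, fps)
    print(f"{fps} fps: budget {frame_budget_ms(fps):.1f} ms, fits={ok}, headroom {headroom:.1f} ms")
```

If the stages instead run concurrently on separate hardware, throughput is limited by the slowest stage rather than the sum, although end-to-end latency is still roughly the sum of the stages.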

Post-production face swap operates without these latency constraints, allowing significantly higher-quality output. The AI model has access to the complete video sequence, including frames that come after the one being processed, enabling it to analyse motion patterns, optimise skin tone blending across the full scene, and ensure temporal consistency throughout. For professional content production (brand videos, marketing campaigns, localisation variants), post-production AI face swap is the relevant mode, and it is here that quality differences between platforms are most visible.
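Access to future frames is what makes symmetric temporal smoothing possible. The sketch below assumes each frame has been reduced to a single number (for example, the average brightness of the swapped face region, a hypothetical simplification) and compares a centred moving average, which needs frames on both sides, with a causal one that a live system is limited to.

```python
def centred_smooth(values: list[float], radius: int) -> list[float]:
    """Average each frame with up to `radius` frames before AND after it.
    Needs future frames, so it only works when the whole clip is available."""
    if radius < 0:
        raise ValueError("radius must be non-negative")
    n = len(values)
    out = []
    for i in range(n):
        window = values[max(0, i - radius): min(n, i + radius + 1)]
        out.append(sum(window) / len(window))
    return out


def causal_smooth(values: list[float], radius: int) -> list[float]:
    """Average each frame with up to `radius` earlier frames only (live-safe)."""
    if radius < 0:
        raise ValueError("radius must be non-negative")
    out = []
    for i in range(len(values)):
        window = values[max(0, i - radius): i + 1]
        out.append(sum(window) / len(window))
    return out


# A single flickering frame (index 2) in an otherwise stable sequence.
brightness = [100, 100, 130, 100, 100]
print(centred_smooth(brightness, 1))
print(causal_smooth(brightness, 1))
```

The centred average spreads the spike evenly around frame 2, whereas the causal average can only carry it forward into later frames, which is one reason offline processing can achieve steadier results.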

Understanding which mode a platform prioritises clarifies its intended use case. A face swap app optimised for live streaming performance may produce noticeably worse results in post-production contexts than a platform designed specifically for rendered output quality, while a platform built for rendered quality may be too slow for live use.

Not all AI face swap systems perform equally across the quality dimensions that matter for professional deployment. Evaluating a platform requires understanding which quality metrics to assess.

Still images rarely reveal the weaknesses in a face swap system. Video exposes them immediately: flickering, inconsistent skin tone frame-to-frame, and changes in apparent age or facial structure between frames create an uncanny valley effect that breaks the illusion entirely. Temporal consistency—the capacity to maintain consistent facial appearance across every frame of a video sequence—is the foundational quality requirement for professional face swap video.
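One simple way to quantify frame-to-frame skin tone inconsistency is to measure how far the average colour of the swapped face moves between consecutive frames. This is an illustrative metric, not a published standard; the function name `tone_flicker` and the per-frame RGB averages are assumptions.

```python
import math


def tone_flicker(frame_tones: list[tuple[float, float, float]]):
    """frame_tones holds the mean (R, G, B) of the swapped face region per frame.
    Returns (mean_jump, worst_frame, worst_jump), where a jump is the Euclidean
    colour distance from the previous frame. Lower values mean a steadier result."""
    if len(frame_tones) < 2:
        return 0.0, None, 0.0
    jumps = [math.dist(a, b) for a, b in zip(frame_tones, frame_tones[1:])]
    worst = max(range(len(jumps)), key=jumps.__getitem__)
    # jumps[k] compares frame k with frame k + 1, so the offending frame is k + 1.
    return sum(jumps) / len(jumps), worst + 1, jumps[worst]


# Hypothetical per-frame skin tone averages with one off-colour frame (index 2).
tones = [(200, 160, 140), (200, 160, 140), (203, 164, 140), (200, 160, 140)]
print(tone_flicker(tones))
```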

The hairline boundary is where many face swap systems degrade. Natural hair has complex transparency and fine structure that is difficult to blend convincingly with a swapped face, particularly when the source and target have different hair colours or styles. Systems that handle hairline boundaries naturally—rather than producing a visible seam—produce significantly more convincing results in practice.
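Seams at boundaries such as the hairline are commonly reduced with alpha compositing through a soft-edged (feathered) mask. The one-dimensional Python sketch below blends a single row of pixel intensities; the function names and numbers are hypothetical, and real hair needs a per-pixel alpha matte that a linear ramp only approximates.

```python
def feather_alpha(distance_inside: float, feather: float) -> float:
    """Weight given to the swapped face at a pixel `distance_inside` px inside
    the mask edge: 0 at or outside the edge, rising linearly to 1 at `feather` px."""
    if feather <= 0:
        # No feathering: a hard edge, which is what produces a visible seam.
        return 1.0 if distance_inside > 0 else 0.0
    return min(1.0, max(0.0, distance_inside / feather))


def blend_row(original: list[float], swapped: list[float],
              edge_index: int, feather: float) -> list[float]:
    """Composite one row: pixels up to edge_index keep the original (hair/background),
    pixels beyond it fade into the swapped face over `feather` pixels."""
    out = []
    for i, (orig_px, swap_px) in enumerate(zip(original, swapped)):
        a = feather_alpha(i - edge_index, feather)
        out.append(a * swap_px + (1 - a) * orig_px)
    return out


row_original = [10.0] * 6  # hypothetical intensities from the target footage
row_swapped = [50.0] * 6   # hypothetical intensities from the generated face
print(blend_row(row_original, row_swapped, edge_index=2, feather=2))
print(blend_row(row_original, row_swapped, edge_index=2, feather=0))
```

The feathered row passes through an intermediate value at the boundary, while the unfeathered row jumps straight from one intensity to the other, which is the kind of hard seam the text describes.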

A face swap that cannot accurately transfer the emotional range of the original performance is commercially limited. Surprised, laughing, or animated expressions test whether a system’s face mapping holds up under non-neutral conditions. Professional applications require that the swapped face read as naturally expressive, not frozen or artificially constrained to a neutral range.

Face swap is increasingly offered as a component within broader AI content creation platforms rather than as a standalone tool. This integration matters because standalone face swap tools produce isolated outputs that must then be combined with other content types (images, videos, lipsync audio, social templates) before they are useful in a production workflow. Platforms that combine face swap with character-consistent image generation, video production, and lipsync within a single environment enable the end-to-end workflows that professional creators actually need.

Among platforms that have built this integrated model, RYLA AI combines face swap capability with photo studio generation, video creation, and lipsync in a unified content production environment. The platform’s stated emphasis on 100% face consistency, meaning the same character identity across photo, video, and face swap outputs, addresses the core challenge that makes face swap operationally viable for brand and influencer content: applying a character’s identity reliably across diverse content formats. With a reported creator community of over 10,000 users and more than 2 million generated images, RYLA AI’s integrated approach reflects the direction the professional AI content creation market has taken.

Traditional video editing tools—even high-end compositing software—approach face replacement as a manual masking and compositing task. An editor isolates the face region, applies colour grading to match skin tones, and manually tracks the replacement across frames. At professional quality, this process can take hours per minute of footage and requires specialist technical skill. The output is only as good as the editor’s patience and expertise, and it rarely holds up to close scrutiny in motion.
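The manual colour-matching step can be approximated with a simple statistical transfer: shift and scale each channel of the replacement face so its mean and spread match the target region. This is loosely in the spirit of Reinhard-style colour transfer, which works in a perceptual colour space rather than the raw RGB used in this simplified sketch; the function name `match_tone` and the sample pixels are hypothetical.

```python
import statistics


def match_tone(source_pixels, target_pixels):
    """Shift and scale each channel of source_pixels so its mean and standard
    deviation match those of target_pixels. Pixels are (R, G, B) tuples."""
    channels = len(source_pixels[0])
    params = []
    for c in range(channels):
        src = [p[c] for p in source_pixels]
        tgt = [p[c] for p in target_pixels]
        s_mean, s_std = statistics.fmean(src), statistics.pstdev(src)
        t_mean, t_std = statistics.fmean(tgt), statistics.pstdev(tgt)
        gain = t_std / s_std if s_std > 0 else 1.0
        params.append((s_mean, t_mean, gain))
    # Apply the per-channel correction and clamp to the 8-bit range.
    return [
        tuple(min(255.0, max(0.0, (p[c] - s_mean) * gain + t_mean))
              for c, (s_mean, t_mean, gain) in enumerate(params))
        for p in source_pixels
    ]


face = [(100, 100, 100), (120, 120, 120)]            # hypothetical swapped-face pixels
target_region = [(150, 140, 130), (190, 160, 130)]   # hypothetical original skin pixels
print(match_tone(face, target_region))
```

A global correction like this does not model local shading, which is part of why AI systems that learn lighting response tend to outperform a single colour grade.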

AI-powered face swap video automates each of these steps using deep learning models trained on large-scale facial data. Face detection, landmark mapping, appearance transfer, lighting adaptation, and temporal smoothing are all handled by the model pipeline rather than by hand. What took hours in a compositing suite can be completed in minutes, often with better results on the naturalness qualities that matter to audiences: skin texture fidelity, eye contact, and the handling of motion blur on facial edges.

The deepfake detection field has developed in parallel with face swap technology, producing both research tools and commercial services that evaluate synthetic media authenticity. For professional content teams, this creates both an obligation—to produce outputs that meet disclosure requirements and can withstand scrutiny—and a quality benchmark. Outputs that pass detection thresholds set by leading deepfake detection tools represent the practical quality standard for professional deployment.

AI-generated video content, including face swap video, is increasingly subject to content authentication standards. The Content Authenticity Initiative and related frameworks such as the C2PA (Coalition for Content Provenance and Authenticity) specification are developing metadata standards that allow AI-modified content to carry provenance signals, a development that will shape how professional content teams document and disclose synthetic media in their production pipelines.

Any professional use of AI face swap video requires attention to disclosure and consent. Industry practice has moved toward explicit labelling of AI-generated or AI-modified content in many publishing contexts, and platform terms of service for major social networks increasingly require disclosure of AI video manipulation. The legal landscape around synthetic media continues to evolve, with several jurisdictions implementing or consulting on disclosure requirements for AI-generated video content.

The commercial applications described above—brand avatar content, localisation variants, privacy-protecting testimonials—are legitimate uses that can be executed responsibly with appropriate disclosure. Applications involving deceptive impersonation of real individuals are both ethically problematic and increasingly legally exposed. Professional content teams should establish clear internal guidelines for AI face swap use that align with applicable platform policies and emerging regulatory requirements.

AI face swap video has matured from a technical curiosity into a practical production capability that addresses real challenges in brand content creation, campaign localisation, and workflow efficiency. The quality gap between current leading systems and earlier generations is substantial enough that professional-grade face swap is now accessible to creators without specialist technical resources. For content teams evaluating the capability, the assessment criteria are clear: temporal consistency, edge handling, and integration with broader content workflows are the factors that determine whether a face swap tool becomes a production asset or remains an experimental novelty.

Here at Nerdbot we are always looking for fresh takes on anything people love with a focus on television, comics, movies, animation, video games and more. If you feel passionate about something or love to be the person to get the word of nerd out to the public, we want to hear from you!
